Scientists Hack Into Fish Brain, Observe Thoughts Swimming

What do thoughts look like? Though that sort of question might sound like something posed late one night in a college dormitory, neuroscientists are keenly interested in being able to see signals traveling throughout the brain in real time.

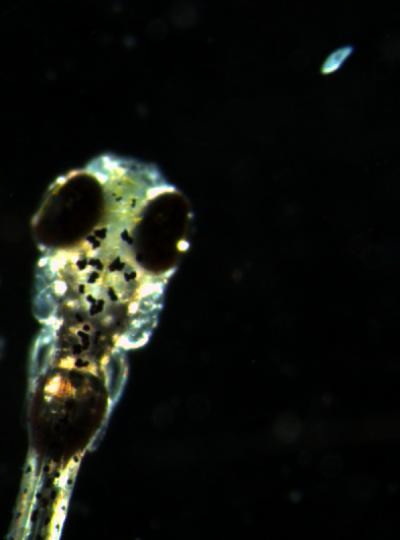

Now Japanese scientists have scored a key breakthrough, using technology to watch as a thought "swims" through the brain of a living zebrafish. They reported the finding in a paper published online on Thursday in the journal Current Biology.

"Our work is the first to show brain activities in real time in an intact animal during that animal's natural behavior," senior author Koichi Kawakami, a researcher at Japan's National Institute of Genetics, said in a statement Thursday. "We can make the invisible visible; that's what is most important."

The researchers used genetic techniques to insert a special fluorescent probe right into the neurons of the fish. When the fish saw a tasty paramecium, various pathways in the fish’s brain lit up, allowing the researchers to correlate specific brain activities with different behaviors.

A zebrafish’s brain is primitive, but its basic design does not differ too much from our own. The researchers behind the current study suggested that they’re interested in exploring more complex behaviors.

"In the future, we can interpret an animal's behavior, including learning and memory, fear, joy or anger, based on the activity of particular combinations of neurons," Kawakami said.

If the technique eventually makes its way into humans, researchers could potentially use this kind of specific brain activity reading to develop better psychiatric drugs.

"This has the potential to shorten the long processes for the development of new psychiatric medications," Kawakami said.

This latest thought visualization experiment is just one of the many recent advances in neuroscience that have ushered in an era where hacking the brain is becoming increasingly plausible.

In 2010, University of Utah scientists unveiled a technique that uses sensors attached directly to the brain to translate signals into speech -- a technique that could prove especially useful to patients rendered mute by paralysis.

A computer hooked up to the sensors was able to correctly identify the word that an epileptic man was thinking between 76 percent and 90 percent of the time. In the initial experiments, the researchers focused only on a few essential words like "yes," "no," "hungry," "thirsty," "hot," "cold," "more" and "less."

"Even if we can just get them 30 or 40 words, that could really give them so much better quality of life," author Bradley Greger told the Telegraph in 2010.

SOURCE: Muto et al. “Real-Time Visualization of Neuronal Activity during Perception.” Current Biology, published online 31 January 2013.

© Copyright IBTimes 2025. All rights reserved.