New System Teaches Robots To Make Sushi?

Robots are already widely used in industrial applications, manufacturing, and assembly. Now, a new system aims to teach them something more refined: molding sticky rice into sushi.

A group of MIT researchers, namely Yunzhu Li, Jiajun Wu, Russ Tedrake, Joshua B. Tenenbaum, and Antonio Torralba, have developed a system that improves a robot’s ability to mold certain materials into a variety of shapes, Engadget reported.

The system, known as a “learning-based particle simulator,” allows a robot to learn and predict how certain materials will respond when it touches them. While this isn’t the first time researchers have tried enabling robots to grab delicate objects, it is the first time they have tried this kind of approach.

Previous learning systems had robots rely heavily on approximation techniques that usually fail in real-life applications. This new system instead lets robots learn how small portions of a material, called “particles,” respond when touched. This helps refine the robot’s control of certain materials.

The group demonstrated how this system works using a two-finger robot aptly named “RiceGrip.” The robot was tasked with molding deformable foam, which served as a proxy for sticky sushi rice, into different shapes.

Using a depth camera and object recognition, RiceGrip was able to mold the foam accurately. It predicted how the foam would respond and adjusted itself accordingly.

Although RiceGrip was successful in molding deformable foam, it failed to mold real rice, as the sticky material slipped in one of the attempts. Still, its success in molding the foam material looks promising.

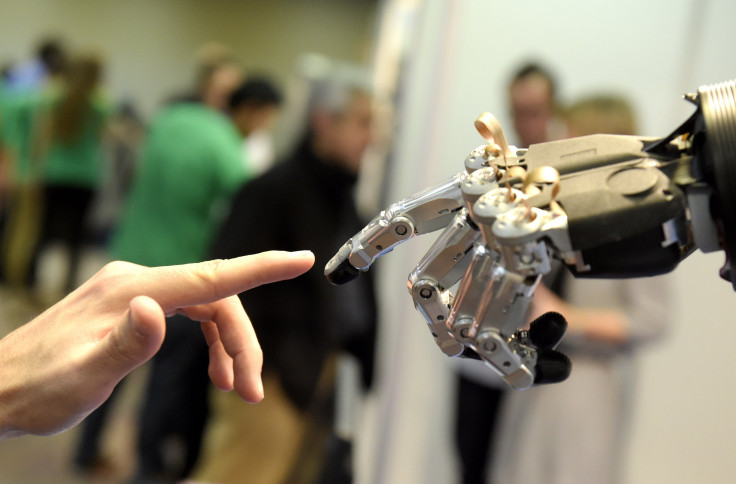

The researchers designed the system to allow robots to manipulate items in a way similar to how human hands do. This system, group member Jiajun Wu said in a press release, was inspired by a 5-month-old’s ability to recognize the difference between solid and liquid materials. Wu’s colleague agrees:

“Humans have an intuitive physics model in our heads, where we can imagine how an object will behave if we push or squeeze it,” the group’s first author, Yunzhu Li, said. “Based on this intuitive model, humans can accomplish amazing manipulation tasks that are far beyond the reach of current robots.”

“We want to build this type of intuitive model for robots to enable them to do what humans can do,” Li added.

The system is still in its early days, and the researchers are still working to improve it. If they succeed, it could mark a breakthrough in robotics. And although that future is still far off, this system makes it a lot easier to imagine a robot preparing a serving of sushi.

© Copyright IBTimes 2025. All rights reserved.