Tesla Under Fresh Scrutiny Over Assisted Driving Features

The US highway safety watchdog has pressed Tesla for details about its driver-assistance systems, including whether it has barred some drivers testing the features from reporting possible safety concerns.

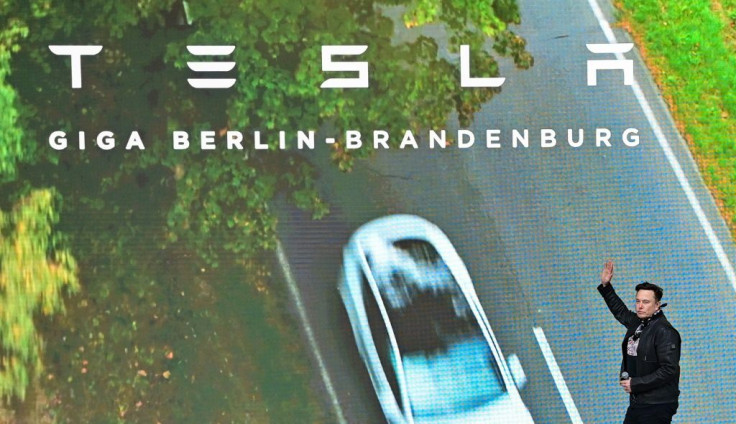

As part of a preliminary probe opened after a series of crashes involving emergency vehicles, the regulator on Tuesday ordered Elon Musk's electric car company to provide information about confidentiality agreements with drivers who have been testing a new feature since October 2020.

The feature, called Full Self-Driving (FSD), is designed to allow the cars to detect stop signs and turn at intersections, while the existing Autopilot function is mainly used to manage speed and keep the vehicle in a lane.

The National Highway Traffic Safety Administration (NHTSA) cited reports saying the confidentiality agreements "allegedly limit the participants from sharing information about FSD that portrays the feature negatively."

The agency relies in part on driver-reported feedback to assess potential safety defects.

"Any agreement that may prevent or dissuade participants in the early access beta release program from reporting safety concerns to NHTSA is unacceptable," the agency wrote in a letter to Tesla, setting a November 1 deadline for the company to respond.

In a separate letter, NHTSA asked Tesla to explain why it has not initiated a recall of vehicles after updating its driver assistance software to improve the detection of emergency vehicle lights at night.

Manufacturers are obliged to recall vehicles once they have identified defects related to safety, NHTSA said.

The agency also asked how the company selected the drivers who began testing a new version of its self-driving system, dubbed FSD Beta 10.2, earlier this month.

Musk announced on Twitter on Monday that this version was being rolled out to drivers the company considers the safest.

The Autopilot system has drawn controversy following a series of crashes involving Tesla vehicles.

Tesla's move to test beta versions of new assistance features in real-world conditions with ordinary drivers but without seeking official permission is further fueling the controversy.

© Copyright AFP. All rights reserved.