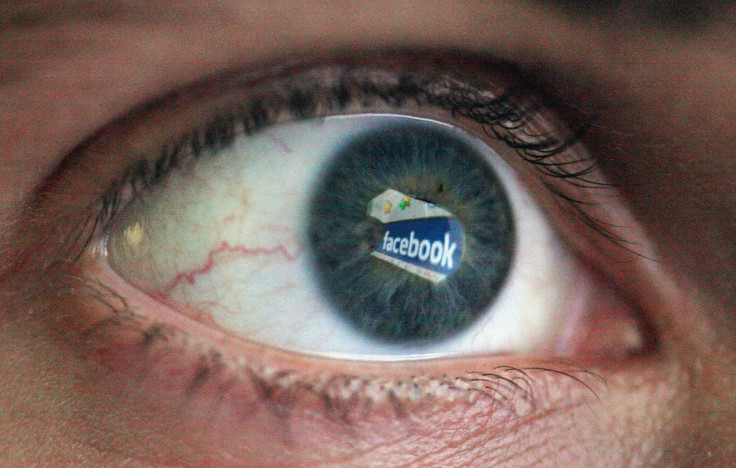

Facebook's Housing Rental Ads Can Be Hidden From Black And Latino Users

Facebook allows housing advertisers to target their ads so that they can be hidden from users of a certain race, according to a new investigation by ProPublica. Facebook had previously vowed to better regulate advertisements that could be seen as discriminatory, but the investigation shows that Facebook’s enforcement doesn’t always work.

ProPublica bought dozens of fake rental ads on Facebook and specified that they not be shown to users in certain categories, such as Spanish speakers, African Americans and people interested in Islam. The ads violated not only Facebook’s own rules but also the Fair Housing Act, which prohibits housing ads that “indicate any preference, limitation, or discrimination based on race, color, religion, sex, handicap, familial status, or national origin.” The ads were approved within minutes.

“This was a failure in our enforcement and we’re disappointed that we fell short of our commitments,” said Ami Vora, vice president of product management at Facebook, in a statement to ProPublica. “The rental housing ads purchased by ProPublica should have but did not trigger the extra review and certifications we put in place due to a technical failure.”

If not rejected outright, the ads should at least have triggered Facebook’s “self-certify” protocol, which prompts advertisers to affirm that their ads comply with anti-discrimination laws.

Rachel Goodman, an attorney with the American Civil Liberties Union's Racial Justice Program, provided a statement to International Business Times.

“While we appreciate that Facebook continues to express a desire to get it right on this important civil rights issue, this story highlights the need for greater transparency and accountability. Had outside researchers been able to see and the system Facebook created to catch these ads, those researchers could have spotted this problem and ended the mechanism for discrimination sooner,” said Goodman. “We’re very, very disappointed to see these significant failures in Facebook’s system for identifying and preventing discrimination in advertisements for rental housing.”

ProPublica published a similar investigation last year, showing that advertisers could buy housing ads targeted to a white-only audience. In February, Facebook introduced new measures that it said would prohibit discriminatory ads.

“Over the past several months, we’ve met with policymakers and civil rights leaders to gather feedback about ways to improve our enforcement while preserving the beneficial uses of our advertising tools,” said Facebook in a press release earlier this year. “We heard concerns that discriminatory advertising can wrongfully deprive people of opportunities and experiences, particularly in the areas of housing, employment and credit, where certain groups historically have faced discrimination.”

Facebook’s ads also came under scrutiny this year during congressional investigations into Russian influence on the 2016 presidential election. Facebook testified that Russian ad buys meant to influence voters could have reached as many as 126 million Americans.

Businesses increasingly turn to Facebook to reach consumers. Last year Facebook took in more than 19 percent of all online ad revenue, netting $14.1 billion, according to the Interactive Advertising Bureau. Spending on Facebook ads accounted for 40 percent of the overall increase in ad revenue between 2015 and 2016.

UPDATE: Wednesday, Nov. 22, 2017 at 12:26 p.m. EST: Facebook's Vora provided a longer statement about ProPublica's reporting to International Business Times in response to this article.

“This was a failure in our enforcement and we’re disappointed that we fell short of our commitments. Earlier this year, we added additional safeguards to protect against the abuse of our multicultural affinity tools to facilitate discrimination in housing, credit and employment. The rental housing ads purchased by ProPublica should have but did not trigger the extra review and certifications we put in place due to a technical failure. Our safeguards, including additional human reviewers and machine learning systems have successfully flagged millions of ads and their effectiveness has improved over time. Tens of thousands of advertisers have confirmed compliance with our tighter restrictions, including that they follow all applicable laws. We don’t want Facebook to be used for discrimination and will continue to strengthen our policies, hire more ad reviewers, and refine machine learning tools to help detect violations. Our systems continue to improve but we can do better. While we currently require compliance notifications of advertisers that seek to place ads for housing, employment, and credit opportunities, we will extend this requirement to ALL advertisers who choose to exclude some users from seeing their ads on Facebook to also confirm their compliance with our anti-discrimination policies – and the law.” -Ami Vora, VP Product Management, Facebook

© Copyright IBTimes 2025. All rights reserved.