Nvidia Wants To Power Self-Driving Cars With AI Supercomputers And Deep Neural Nets

LAS VEGAS -- Self-driving, or autonomous, cars are going to be the big story at the Consumer Electronics Show (CES) this week, and to kick things off Nvidia has announced what it is calling the world's first in-car supercomputer, which is meant to help car manufacturers handle the enormous processing challenge presented by autonomous vehicles.

Nvidia's Drive PX2 is an update of last year's original, which also promised to change the way self-driving cars process information. While last year's model featured two of Nvidia's then cutting-edge Tegra X1 processors, the 2016 model features 12 CPU cores and, by Nvidia's reckoning, is equivalent to the computing power of 150 Apple MacBook Pro laptops -- all in a package the size of a lunchbox.

Drive PX2, which will first be tested by Volvo in a number of its self-driving cars, will allow vehicles to process, in real time, some of the huge number of images they receive from cameras mounted on the car, something competing platforms are unable to do.

However, Drive PX2 is just one part of Nvidia's "end-to-end" solution for self-driving cars. The other main strand is a deep neural net platform that will allow each manufacturer deploying it to create and manage its own deep neural net -- letting its cars better recognize the objects they encounter, including vehicles, pedestrians, lights and road signs.

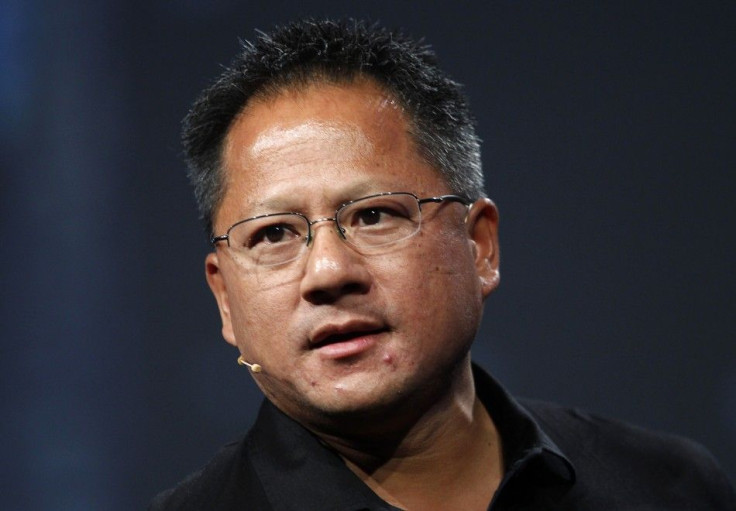

“Self-driving cars will revolutionize society and our vision is to create a computing platform which will allow the entire automotive industry to realize this vision,” Nvidia CEO Jen-Hsun Huang said during the company's CES news conference Monday evening.

Deep neural networks are an artificial intelligence technique that will allow manufacturers to train their vehicles to identify objects without having to write recognition rules by hand. Instead, the network is shown thousands of example images and gradually learns to recognize the objects in them.
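To give a rough sense of what "learning from examples" means, here is a minimal sketch in Python. It is a toy illustration, not Nvidia's system: it assumes tiny four-pixel "images" labeled by whether the bright object sits on the left (1) or the right (0), and it trains a single artificial neuron rather than a deep network. The names `train`, `predict` and `EXAMPLES` are hypothetical and chosen only for this example.

```python
import math

# Toy training set (an assumption for illustration): each "image" is four
# pixel brightness values [top-left, top-right, bottom-left, bottom-right].
# Label 1 means the bright object is on the left, 0 means it is on the right.
EXAMPLES = [
    ([1.0, 0.0, 1.0, 0.0], 1),
    ([0.9, 0.1, 0.8, 0.0], 1),
    ([0.8, 0.2, 1.0, 0.1], 1),
    ([0.0, 1.0, 0.0, 1.0], 0),
    ([0.1, 0.9, 0.0, 0.8], 0),
    ([0.2, 1.0, 0.1, 0.9], 0),
]


def train(examples, epochs=200, learning_rate=0.5):
    """Fit one neuron (logistic regression) by repeatedly showing it examples."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for pixels, label in examples:
            # Weighted sum of the pixels, squashed into a 0..1 score.
            z = sum(w * p for w, p in zip(weights, pixels)) + bias
            score = 1.0 / (1.0 + math.exp(-z))
            # Nudge each weight to shrink the gap between score and label.
            error = score - label
            weights = [w - learning_rate * error * p
                       for w, p in zip(weights, pixels)]
            bias -= learning_rate * error
    return weights, bias


def predict(model, pixels):
    """Return 1 (object on the left) or 0 (object on the right)."""
    weights, bias = model
    z = sum(w * p for w, p in zip(weights, pixels)) + bias
    return 1 if z > 0 else 0


# No rule about "left" or "right" is written anywhere above; the weights
# are learned entirely from the labeled examples.
model = train(EXAMPLES)
```

Calling `predict(model, [0.9, 0.1, 0.8, 0.2])` then classifies an image the neuron has never seen. A production deep neural net stacks many layers of such units and trains on far larger image sets, which is where the heavy processing demand comes from.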

Huang, in a typically technical and rambling presentation, told the audience that getting self-driving cars to work was hard and that his company had spent several thousand hours working on the problem over the last year. In tests of its own system, Nvidia said, it had raised object recognition rates from 39 percent to 88 percent in the space of six months, just shy of the industry-leading standard -- though, as Huang noted, the big difference is that Nvidia's system works in real time.

Companies like Ford, Mercedes-Benz and Japanese self-driving taxi company ZMP are all using the system to develop their respective self-driving cars. Nvidia is also working to support all the major artificial intelligence frameworks in use today, including Google's TensorFlow, Facebook's Big Sur and IBM's Watson, by providing GPU acceleration through its CUDA-enabled GPUs, allowing operations that would typically take years to be completed in months or even weeks.

© Copyright IBTimes 2025. All rights reserved.