Artificial Intelligence: Google’s DeepMind Creates Neural Network That Can ‘Logically Reason’ Its Way Around London Underground

Artificial neural networks — systems patterned after the arrangement and operation of neurons in the human brain — excel at tasks that require pattern recognition, but are woefully limited when it comes to carrying out instructions that require basic logic and reasoning. This is a problem for scientists working toward the creation of Artificial Intelligence (AI) systems capable of performing complex tasks with minimal human supervision.

In a step toward overcoming this hurdle, researchers at Google’s DeepMind — the company that developed the Go-playing computer program AlphaGo — announced earlier this week the creation of a neural network that can not only learn, but can also use data stored in its memory to “logically reason” and make inferences to answer questions.

DeepMind’s new system — called a Differentiable Neural Computer (DNC) — combines deep learning, wherein it can learn from examples and make sense of complex input it has never received before, with an external memory, which, as the DeepMind researchers Alexander Graves and Greg Wayne explain in a blog post, allows it to “store knowledge quickly and reason about it flexibly.”

In order to achieve this, the researchers first trained the neural network using randomly generated map-like structures — a process that allowed the DNC to learn how to store connections between various parts in its external memory. After this, when it was confronted with a new map, the DNC was able to provide answers that were not explicitly stated in the data set.

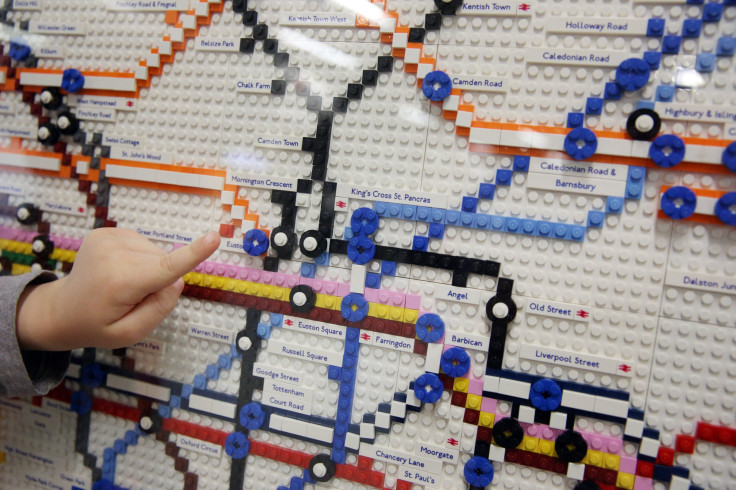

“A DNC can learn on its own to write down a description of an arbitrary graph and answer questions about it,” the researchers explained. “When we described the stations and lines of the London Underground, we could ask a DNC to answer questions like, ‘Starting at Bond street, and taking the Central line in a direction one stop, the Circle line in a direction for four stops, and the Jubilee line in a direction for two stops, at what stop do you wind up?’ Or, the DNC could plan routes given questions like ‘How do you get from Moorgate to Piccadilly Circus?’”
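To make the two kinds of question concrete, here is a minimal sketch of how a conventional, hand-written program would answer them. It is not DeepMind's method: the DNC learns this behavior from examples, whereas the code below spells out every rule. The station and line names, the `EDGES` list and the functions `follow`, `build_graph` and `shortest_route` are all hypothetical and do not reflect the real Underground map.

```python
from collections import deque

# Toy network of (station, line, next_station) facts.
# Hypothetical names, NOT the real London Underground.
EDGES = [
    ("A", "red", "B"),
    ("B", "red", "C"),
    ("C", "blue", "D"),
    ("D", "blue", "E"),
    ("B", "green", "E"),
]

def follow(edges, start, legs):
    """Traversal question: ride each (line, stops) leg in turn, return the final station."""
    next_stop = {(src, line): dst for src, line, dst in edges}
    station = start
    for line, stops in legs:
        for _ in range(stops):
            station = next_stop[(station, line)]
    return station

def build_graph(edges):
    """Index the facts as station -> list of (line, neighbour), usable in both directions."""
    graph = {}
    for src, line, dst in edges:
        graph.setdefault(src, []).append((line, dst))
        graph.setdefault(dst, []).append((line, src))
    return graph

def shortest_route(graph, start, goal):
    """Route-planning question: breadth-first search for a route with the fewest stops."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for _line, nxt in graph.get(path[-1], []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None

print(follow(EDGES, "A", [("red", 2), ("blue", 1)]))
print(shortest_route(build_graph(EDGES), "A", "E"))
```

Every step here, from the lookup table to the search order, was written by a programmer in advance, which is the kind of predefined rule the DNC is reported to do without.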

Or take a family tree. Unlike a list, which is a relatively simple data structure, a family tree is much more complex, because links between generations have to be traced back along lines connecting various levels. After being told of just a handful of parent, child and sibling relationships, the DeepMind DNC was also able to work out connections between family members, including answers to questions about a given subject’s distant relatives that required complex deductions.
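The kind of deduction involved can be illustrated with a small hand-coded sketch, which chains a few stated parent facts into relationships that were never stated directly. Again, this is only an assumption-laden illustration of the task, not of how the DNC solves it; the names in `PARENTS` and the helper functions are invented for the example.

```python
# Hypothetical family facts: child -> set of that child's parents.
PARENTS = {
    "Dana": {"Bea", "Carl"},
    "Bea": {"Ann", "Abe"},
    "Finn": {"Ann", "Abe"},
    "Gus": {"Finn"},
}

def siblings(person):
    """People who share at least one known parent with `person`."""
    mine = PARENTS.get(person, set())
    return {p for p, ps in PARENTS.items() if p != person and ps & mine}

def grandparents(person):
    """Parents of the person's parents."""
    return {g for p in PARENTS.get(person, set()) for g in PARENTS.get(p, set())}

def uncles_and_aunts(person):
    """Siblings of the person's parents."""
    return {s for p in PARENTS.get(person, set()) for s in siblings(p)}

def cousins(person):
    """Children of the person's uncles and aunts."""
    relatives = uncles_and_aunts(person)
    return {c for c, ps in PARENTS.items() if ps & relatives}

print(grandparents("Dana"))
print(uncles_and_aunts("Dana"))
print(cousins("Dana"))
```

None of Dana's grandparents, uncles or cousins appear as explicit facts; each answer comes from following several parent links in sequence.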

“We also found it possible to analyse how DNCs used their memories by visualising which locations in memory were being read by the controller to produce what answers,” the researchers said. “Conventional neural networks in our comparisons either could not store the information, or they could not learn to reason in a way that would generalise to new examples.”

The key milestone here is not which tasks the neural network manages to perform, but rather how it performs them. A smartphone app can also provide the fastest route between two stations, but the DNC does so without consulting a preprogrammed timetable or a set of predefined rules.

“We hope that DNCs provide both a new tool for computer science and a new metaphor for cognitive science and neuroscience: here is a learning machine that, without prior programming, can organize information into connected facts and use those facts to solve problems,” the researchers said.

© Copyright IBTimes 2024. All rights reserved.